Identify Logical Components

In any IT system, three main components deal with information and data.

- Data actions

- Data objects

- Job to perform

Data objects represent logical constructs that show the data in use. Data actions refer to those commands which apply to multiple data objects to execute a task. The job to perform is the function that users want to fulfill as part of their organizational role.

When multiple systems combine into a single unified system, you need to identify the jobs to perform, data actions, and data objects for all individual systems.

You then need to implement all such components as modules inside the codebase, where one module or more will indicate each job to perform, data action, and data object. These modules are put into various categories for steps that will appear later.

It is easiest to find data object in the system that is in use. Taking this dataset, system architects can ascertain data actions and match them with jobs to be performed by system users. The codebase frequently is object-centric, and all code objects are linked with jobs to perform and functions.

System architects should pay heed to the following issues.

If applications make use of similar data, then can they merge this data?

What steps should they take when data fields are found to missing or different in similar objects?

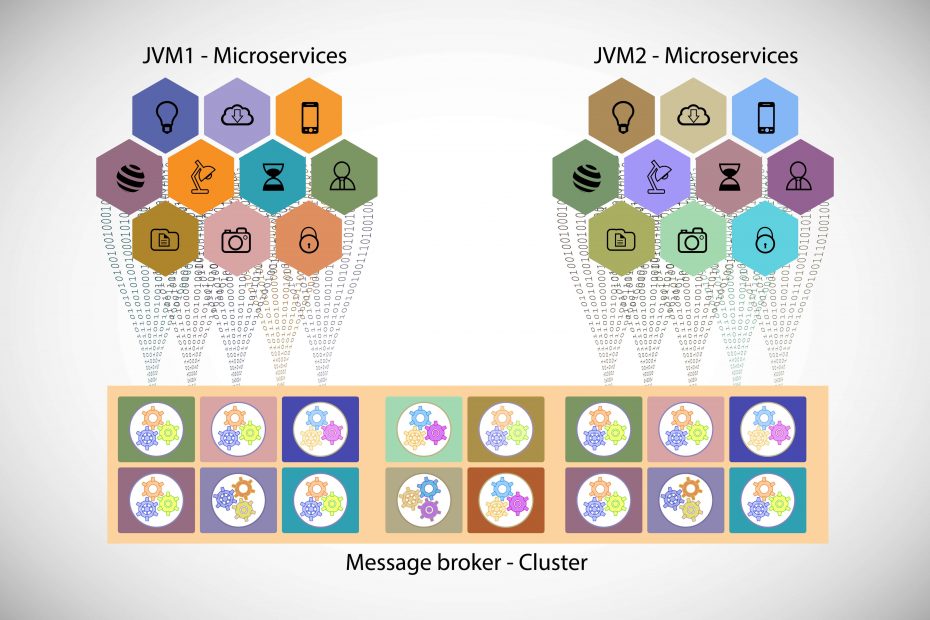

The movement from monolithic to microservices architecture does not directly impact the user interface. The components that are best suited for such migration are decided on various factors such as use frequency, number of users, performance speed, and dependencies on the remaining components.

Unlock the future of intelligent applications with our cutting-edge Generative AI integration services!

Organize Enterprise Microservices Architecture Components

When each module is identified uniquely and put into the appropriate group, you should organize these groups internally.

Components performing the same function should be identified and remediated before deploying the microservice architecture. When the microservice system is finalized, there should only be a single microservice performing it for any function.

Duplicate functions may likely be found where different monolithic applications are combined. It also shows where legacy code is added to an application.

The following steps have to be addressed when combining duplicate data and functions.

- Verify data accuracy

- Verify datatypes

- Check data formats

- Manage missing values and fields

- Identify outliers

- Verify data units

Any data piece should have just one data repository. This is one of the results of such migration. Hence, any replicated data should be scrutinized, and its representation should be determined. Subject to the job that must be done, the same data can possibly be represented differently.

There is also the possibility of getting similar data from various locations. The data could be coming to form various data sources. No matter how the data is put into use, determining the final representation of each data type is essential.

Find Component Dependencies

After identifying and organizing components for migration, system architects must look for dependencies between various components. Source code static analysis for finding calls between datatypes and libraries is one way of performing this step. Different dynamic analysis tools are available that analyze application usage patterns when executed to give a map showing components. This will reveal component dependencies.

SonarGraph Explorer is a tool that works for finding component dependencies. This tool gives a visual description of how each component is linked with different components within the codebase.

Find Component Groups

After identifying dependencies, system architects arrange components in the form of cohesive groups that may be turned into microservices. Here, the objective is to determine objects as well their related actions that should be separated logically in the resultant system.

Remote User Interface API

System users, the system, and its components rely on the user interface for communication. Hence, the interface must be designed for scalability. Care should be taken with the system design so that problems do not transpire when the system evolves.

The interface should be operable after the migration and during the migration as well. It will change when the monolithic system is changed to enterprise microservices architecture.

An important result of this endeavor is the unified API that applications and user interface utilized for handling data. A lot depends on such API. Hence it should be designed so that present data interactions do not change substantially. Rather, it should allow the inclusion of new actions, attributes, and objects as they are found and as they become available. When the API layer is working, new functions are put to work via this API rather than legacy applications.

API design and implementation is a key facet of successful change to microservices. The API should appropriately manage each data-access case used by applications that work with the API.

Care should be taken for API changes that affect backward compatibility. These should be dealt with to avoid deployment issues. There should be a mechanism in the API through which the application can look up the API version and issue warnings for incompatibilities. For the correct functioning of the microservices architecture, the API should provide data access to microservices. And during the transfer period, this can be done via the legacy application or macro services.

Small Disadvantaged Business

Small Disadvantaged Business (SDB) provides access to specialized skills and capabilities contributing to improved competitiveness and efficiency.

The API should have the right attributes to facilitate the scalability of the resultant system. So the API should be stateless, it must manage every data object in the system, and it should also have full backward compatibility with older versions.

The REST API is frequently used; it is not necessary for microservices.

Further information available at Microservices Agile Software Driving Business Value.