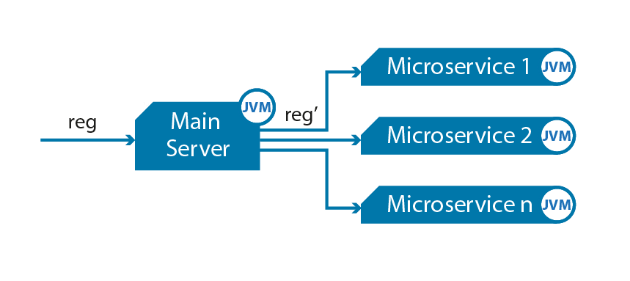

Microservice-based systems are gaining popularity in the industry. That is not surprising given the promise of short development times, continuous deployment cycles, and a scalable component model for software systems. However, there is a downside: things can quickly spiral out of control.

How do you maintain control as the number of microservices, messages, and interconnections between them grows? How do you handle the rollout of new and upgraded services? How do you keep your grasp on the system? How can you give your CEO any assurances about its behavior?

The solution is to use a toolset that enables you to accept the system’s complexity. Instead of evaluating and tracking technical behavior, focus on the behavior that matters: business outcomes. Doing so lets you understand the consequences of new deployments and failures at the appropriate level.

The most difficult challenge for most businesses is integrating microservices with existing organizational systems. Most businesses will not simply throw out the old and replace it with the new. So, can the two happily coexist?

Yes, but there is a significant learning curve. A microservices-based integration architecture requires new patterns for event-based interaction, micro gateways for governance, and network-level controls that can be managed via a service mesh.

Using Service Mesh for Network-Level Interactions

One solution that has been developed to handle microservices in the cloud is the service mesh, which manages network-level interactions. But what if your mesh were a collection of lightweight, versatile micro gateways managed by a centralized API management system? You would then gain the added advantage of visibility into each microservice at the application level, so you could reuse and govern them.

You can confidently deploy and manage your apps with an API Management solution that centralizes the operation of all your meshes, microservices, and APIs.

Without coding, you can establish context-specific policies for targeted and personalized user experiences. This lets you achieve the agility that microservices provide while avoiding the complexity associated with microservices architectures.

A service mesh enables developers to make adjustments without touching the application code. By mirroring and monitoring traffic on different versions of the same service, developers can test functionality before rollout and determine the best way to route traffic through the system for different usage patterns.
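To make the idea of splitting and mirroring traffic between versions concrete, here is a minimal Python sketch. It is not how any particular service mesh implements it; the function names (`pick_version`, `route`), the weights, and the handlers are all hypothetical and only illustrate the routing decision.

```python
import random


def pick_version(weights, rng=random):
    """Pick a service version at random, proportionally to its traffic weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


def route(request, weights, handlers, mirror_to=None, rng=random):
    """Serve a request from a weighted version and optionally mirror it.

    The mirrored copy is sent to `mirror_to` for observation only:
    its response is discarded and its errors never reach the caller.
    """
    version = pick_version(weights, rng)
    response = handlers[version](request)
    if mirror_to is not None and mirror_to != version:
        try:
            handlers[mirror_to](request)  # shadow traffic, result ignored
        except Exception:
            pass
    return version, response
```

With weights such as `{"v1": 0.9, "v2": 0.1}`, roughly one request in ten would be served by the new version, while `mirror_to="v2"` would let the new version see all traffic without affecting users.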

Most importantly, it automatically tracks what is happening between services at all times, providing vital metrics that help you quickly determine the cause of breakdowns or performance issues.

Through authentication and authorization, a service mesh can also significantly improve security in microservices-based development. When services communicate with one another, each must make sure that the others are who they claim to be. Without a security layer, your microservices are essentially communicating over an unencrypted open channel.

Authorization is also essential. Some services in an app should communicate with one another, while others should not.

For instance, while a checkout service communicates with a payment service, the front-end service should not communicate with the payment service directly. Maintaining secure authorization is challenging if done manually, but it can be customized and automated when built into a service mesh.
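The checkout and payment example can be expressed as a simple allow-list of service-to-service calls. The sketch below is illustrative only: the service names and the `frontend` to `checkout` rule are assumptions, and a real mesh would enforce such rules in its proxies rather than in application code.

```python
# Hypothetical allow-list of (caller, callee) pairs.
# Anything not listed is denied by default.
ALLOWED_CALLS = {
    ("frontend", "checkout"),   # assumed for illustration
    ("checkout", "payment"),    # from the example in the text
}


def is_call_allowed(source, destination, allowed=ALLOWED_CALLS):
    """Return True only if this caller may talk to this destination."""
    return (source, destination) in allowed
```

Keeping the policy as data in one place, with deny as the default, is what makes it practical to review and automate.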

Using Container Orchestration to Scale Up a Service

Containerization is a must-have for scaling a service up quickly using scripts. If a service is under high load, you must rapidly increase or reduce the number of containers running it based on the load level.
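A scaling script ultimately has to turn a load measurement into a container count. The following sketch shows one common proportional rule under stated assumptions: `load_per_container` and `target_load` are hypothetical metrics in the same unit (for example, requests per second), and the minimum and maximum bounds are arbitrary defaults.

```python
import math


def desired_containers(current, load_per_container, target_load,
                       min_count=1, max_count=20):
    """Return how many containers are needed to bring each one near target_load.

    Scales the current count by the ratio of observed load to target load,
    rounds up, and clamps the result to [min_count, max_count].
    """
    if current < 1 or target_load <= 0:
        raise ValueError("current must be >= 1 and target_load must be > 0")
    desired = math.ceil(current * load_per_container / target_load)
    return max(min_count, min(max_count, desired))
```

For example, four containers each handling 90 requests per second against a target of 60 would yield a desired count of six, while a very large spike would be capped at `max_count`.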

Because managing containers is a difficult task, we suggest using orchestration. Docker, for example, has had an orchestration system (swarm mode) built in since the summer of 2016. There are also several cloud and on-premise container clustering and orchestration options available today.

Using Cloud as a Reliable Infrastructure for Microservices

Microservices require a dependable and scalable infrastructure, and most businesses choose the cloud to provide it. Microservice infrastructure is typically deployed on platforms such as Azure Service Fabric and Amazon EC2. Docker and other container services are available on all popular cloud platforms.

Going with Serverless

We must mention serverless because it has changed the rules. For irregular operations or events, many organizations use AWS Lambda or Azure Functions. These tools help with integration pipelines and message processing. When processing documents, for instance, you could send some text to a function to extract tags and receive the results.
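As a rough sketch of the tag-extraction example, the function below follows the `handler(event, context)` shape used by Python functions on AWS Lambda. The event layout (a `text` field), the stop-word list, and the word-frequency approach are assumptions made for illustration, not a recommended tagging method.

```python
import re
from collections import Counter

# Small illustrative stop-word list; a real one would be much longer.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "for", "on", "with"}


def extract_tags(text, limit=3):
    """Return the most frequent non-trivial words in the text as tags."""
    words = re.findall(r"[a-z]+", text.lower())
    counts = Counter(w for w in words if w not in STOP_WORDS and len(w) > 2)
    return [word for word, _ in counts.most_common(limit)]


def handler(event, context):
    """Function entry point: expects {"text": "..."} and returns {"tags": [...]}."""
    text = event.get("text", "")
    return {"tags": extract_tags(text)}
```

Because the function is stateless and only runs when a document arrives, it fits the irregular, event-driven workloads described above.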

Use an API Gateway

Try connecting a hundred microservices directly to each other and you will see how difficult it is to manage security, analyze traffic, establish reliable channels, and scale the infrastructure for high-load API calls.

An API Gateway can help you avoid these issues and consolidate API management. Amazon API Gateway and Azure API Management provide a cloud gateway for creating, publishing, maintaining, monitoring, and securing APIs at any scale. There are also on-premise options, such as MuleSoft API Gateway.

Your microservices should only be aware of the API Gateway and communicate with one another through it; this way, you’ll have complete control over API calls.
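The idea that services know only the gateway can be illustrated with a small in-process stand-in. This is a sketch under assumptions, not a real gateway: the `ApiGateway` class, the route prefixes, and the handlers are all hypothetical.

```python
class ApiGateway:
    """Hypothetical in-process stand-in for an API gateway."""

    def __init__(self):
        self._routes = {}    # path prefix -> handler callable
        self.call_log = []   # (caller, path) for every routed call

    def register(self, prefix, handler):
        """Expose a service behind a path prefix."""
        self._routes[prefix] = handler

    def call(self, caller, path, payload=None):
        """Route a call to the handler with the longest matching prefix."""
        matches = [p for p in self._routes if path.startswith(p)]
        if not matches:
            raise LookupError(f"no route for {path}")
        handler = self._routes[max(matches, key=len)]
        self.call_log.append((caller, path))  # single place to observe traffic
        return handler(payload)


gateway = ApiGateway()
gateway.register("/payments", lambda payload: {"status": "charged", "amount": payload["amount"]})
gateway.register("/inventory", lambda payload: {"status": "reserved", "sku": payload["sku"]})
```

A caller such as the checkout service would use `gateway.call("checkout", "/payments/charge", {"amount": 25})` and never hold a direct reference to the payment service, which is what gives the gateway a complete view of API calls.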

Refactor Legacy Systems

An API Gateway is particularly useful for replacing legacy systems with new ones or transitioning to a microservices architecture. The first step is to redirect all direct API calls to the API Gateway.

As a result, each system will only be aware of the API Gateway and not of any other subsystems. The legacy system can then be split up and its parts replaced one by one.
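The piece-by-piece replacement described above amounts to re-pointing one gateway route at a time while callers stay unchanged. The sketch below uses a plain dictionary as the route table; the paths, handler names, and payloads are hypothetical.

```python
def legacy_billing(payload):
    """Billing logic still living in the legacy system."""
    return {"handled_by": "legacy-monolith", "invoice": payload["order_id"]}


def legacy_orders(payload):
    """Order logic still living in the legacy system."""
    return {"handled_by": "legacy-monolith", "order": payload["order_id"]}


def new_billing_service(payload):
    """Replacement billing microservice with the same contract."""
    return {"handled_by": "billing-service", "invoice": payload["order_id"]}


# Initially every route points at the legacy system.
routes = {"/billing": legacy_billing, "/orders": legacy_orders}


def call_via_gateway(path, payload):
    """Callers only ever use this entry point, never a subsystem directly."""
    return routes[path](payload)


def migrate(path, new_handler):
    """Re-point a single route; all other routes keep their current handler."""
    routes[path] = new_handler
```

Calling `migrate("/billing", new_billing_service)` moves billing traffic to the new service while `/orders` continues to be served by the legacy system.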

Use Enterprise Service Bus

An Enterprise Service Bus (ESB) consolidates asynchronous communications for inter-service interaction in the same way that an API Gateway consolidates synchronous calls. In some cases, deciding between an ESB and an API Gateway is extremely difficult. This is because an ESB can also be used to emulate synchronous calls by employing two queues, one for the outgoing request and the other for the inbound response.

An API Gateway, on the other hand, can deliver an asynchronous call by establishing a waiting thread on both ends. Any mature cloud platform provides a dependable and scalable message bus.
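The two-queue arrangement, one queue for the outgoing request and one for the inbound response, can be sketched with Python's standard `queue` and `threading` modules standing in for a message bus. This is a minimal sketch that assumes a single caller at a time; the message fields, the `correlation_id` convention, and the upper-casing "service" are hypothetical.

```python
import queue
import threading
import uuid

request_queue = queue.Queue()    # carries outgoing requests
response_queue = queue.Queue()   # carries inbound responses


def worker():
    """Service side: consume requests and publish a response for each."""
    while True:
        message = request_queue.get()
        if message is None:  # sentinel used to stop the worker
            break
        response_queue.put({
            "correlation_id": message["correlation_id"],
            "result": message["body"].upper(),
        })


def start_worker():
    """Start the service in a background thread and return the thread."""
    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    return thread


def call_sync(body, timeout=2.0):
    """Caller side: send a request, then block until the reply arrives."""
    correlation_id = str(uuid.uuid4())
    request_queue.put({"correlation_id": correlation_id, "body": body})
    reply = response_queue.get(timeout=timeout)  # raises queue.Empty on timeout
    if reply["correlation_id"] != correlation_id:
        raise RuntimeError("reply does not match the request")
    return reply["result"]
```

The correlation identifier is what lets the caller tie a response back to its request. With several concurrent callers, each would need its own reply queue or a dispatcher keyed on that identifier.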

Final Word for How to Keep Complexity Out Of Microservices

While the microservices integration strategy provides developers with speed and flexibility, many are discovering the hard way that it also comes with a lot of complexity. Luckily, the complexity of a microservices architecture can be kept in check by following the recommendations above.
